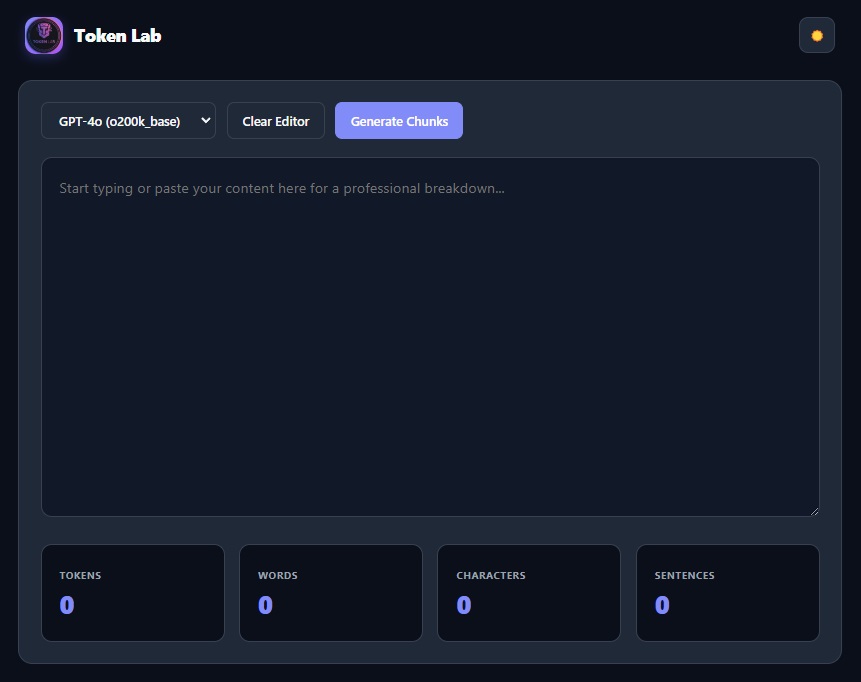

Token Lab: Token Counter and Analysis

17/01/2026 • 00:01:00

Project Reference

This article relates to a project in our portfolio.

Introduction

Token Lab calculates the number of tokens in a given piece of text.

It's useful when working with AI models that have token limits or usage-based pricing.

🚀 How to Use

- Paste or input your text.

- Select an algorithm from the dropdown (token counts differ between AI models).

- Calculate the number of tokens!

Other Features

Beyond simple counting, Token Lab analyses:

- Reading & Speaking Time — Essential for scriptwriters and content creators

- Lexical Density — A measure of how information-dense your text is

- Keyword Frequency — Identifies recurring patterns and keywords
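The three analyses above can be approximated in a few lines of Python. This is a minimal sketch, not Token Lab's own implementation. The reading rate (200 words per minute), the speaking rate (150 words per minute), the small function-word list, and the names `analyse`, `READING_WPM`, `SPEAKING_WPM` and `FUNCTION_WORDS` are all assumptions chosen for illustration. Lexical density is taken here as content words divided by total words, with "content word" crudely defined as any word not in the function-word list.

```python
import re
from collections import Counter

# Assumed average rates; real tools may use different figures.
READING_WPM = 200
SPEAKING_WPM = 150

# Hypothetical, deliberately tiny list of English function words.
# Proper lexical-density measures usually rely on a part-of-speech tagger.
FUNCTION_WORDS = {
    "a", "an", "the", "and", "or", "but", "of", "to", "in", "on", "at",
    "for", "with", "is", "are", "was", "were", "be", "it", "this", "that",
    "as", "by", "from", "i", "you", "he", "she", "we", "they",
}


def analyse(text: str, top_n: int = 3) -> dict:
    """Return reading/speaking time, lexical density and top keywords."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    total = len(words)
    content = [w for w in words if w not in FUNCTION_WORDS]
    return {
        "words": total,
        # Minutes = word count / words per minute.
        "reading_minutes": total / READING_WPM,
        "speaking_minutes": total / SPEAKING_WPM,
        # Share of words that carry lexical content (0.0 to 1.0).
        "lexical_density": len(content) / total if total else 0.0,
        # Most frequent content words; equal counts keep first-seen order.
        "keywords": Counter(content).most_common(top_n),
    }


sample = "The model reads the tokens, and the tokens drive the cost of the model."
print(analyse(sample))
```

For the sample sentence, half of the 14 words are function words, so the lexical density comes out at 0.5 and "model" and "tokens" surface as the top keywords.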

Limitations

- The same text produces different token counts under different tokenizers, and counts may differ slightly from the figures a model provider reports.

- This tool provides an estimate, not a guarantee of exact usage in every system.

What Is a Token?

Tokens are the basic units of text that language models process, and they are not always the same as words or characters.

A token can be:

- A whole word

- Part of a word

- A punctuation mark or symbol

For example:

"hello"→ 1 token"tokenization"→ may be split into multiple tokens"Hello, world!"→ includes tokens for words and punctuation

Because of this, character count or word count alone is not always accurate for estimating model usage.
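To see why a plain word count falls short, compare a whitespace split with a crude split that separates punctuation from words. The regex below is only an illustration of the idea, not any model's tokenizer; real tokenizers can also break words into sub-word pieces, so the actual count for "Hello, world!" depends on the tokenizer selected.

```python
import re

text = "Hello, world!"

# Naive word count: split on whitespace only.
words = text.split()  # ['Hello,', 'world!']

# Crude pre-tokenization: runs of word characters, plus each
# punctuation mark as a separate piece.
pieces = re.findall(r"\w+|[^\w\s]", text)  # ['Hello', ',', 'world', '!']

print(len(text), len(words), len(pieces))  # 13 2 4
```

Thirteen characters, two words and four pieces: three different answers for the same short string.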

🔍 Why Tokenization Matters

When working with large language models (LLMs), it’s easy to think in terms of words, sentences, or characters. But under the hood, models don’t process text that way — they operate on tokens.

Tokenization is the process of breaking text into these smaller units that a model can understand and process. Depending on the tokenizer used, a token might be:

- A whole word ("hello")

- Part of a word ("un" + "believable")

- A number or symbol ("42", "@")

- Whitespace or punctuation

For example, the sentence:

"Tokenization matters."

might be split into tokens like:

"Token", "ization", " matters", "."

Because models count tokens rather than characters or words, a word count only gives an approximation. A common rule of thumb for English text is that 1,000 tokens ≈ 750 words, but actual counts vary significantly between tokenizers (e.g., OpenAI's o200k_base vs. cl100k_base).
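The rule of thumb turns into a one-line estimate. Treat it as a rough budget check for English prose only; the 0.75 words-per-token ratio is the heuristic quoted above, and `estimate_tokens_from_words` is a hypothetical helper name.

```python
import math


def estimate_tokens_from_words(word_count: int, words_per_token: float = 0.75) -> int:
    """Rule-of-thumb estimate: 1,000 tokens ≈ 750 words (~0.75 words per token).

    Rounds up so the estimate errs on the safe side of a token limit.
    """
    return math.ceil(word_count / words_per_token)


print(estimate_tokens_from_words(750))   # 1000
print(estimate_tokens_from_words(1500))  # 2000
```

An exact count from the target model's tokenizer should always take precedence over this estimate.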

Token Lab replaces this rule of thumb with deterministic counts for:

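- GPT-4o (o200k_base): a roughly 200K-entry vocabulary that generally needs fewer tokens for the same text, especially outside English

- GPT-4 Turbo (cl100k_base): the encoding shared with GPT-4 and GPT-3.5 Turbo

- Llama 3: Meta's tokenizer with a 128K-token vocabulary

- Standard Metrics: character, word, and sentence counts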
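The standard metrics are simple to approximate. The sketch below is illustrative, not Token Lab's code: `standard_metrics` is a hypothetical name, and the sentence splitter is a naive assumption (break after ".", "!" or "?" followed by whitespace), so abbreviations such as "e.g." will inflate the sentence count.

```python
import re


def standard_metrics(text: str) -> dict:
    """Character, word and sentence counts using simple heuristics."""
    # Split after sentence-ending punctuation that is followed by whitespace;
    # drop empty fragments so blank input yields zero sentences.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return {
        "characters": len(text),
        "characters_no_spaces": len(re.sub(r"\s", "", text)),
        "words": len(text.split()),
        "sentences": len(sentences),
    }


print(standard_metrics("Tokens matter. Do they? Yes!"))
```

A trailing fragment without final punctuation still counts as one sentence, which suits pasted text that stops mid-thought.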

Summary

Token Lab offers a fast, lightweight way to understand how much text you’re sending to a model. It’s ideal for prompt design, experimentation, and general awareness of token usage.
